What You Need to Know About Deepfakes (and How to Safeguard Your Reputation from Them)

Fake videos and images have been around for as long as photography and film have existed. Forgeries have both entertained and deceived, and since the inception of the internet, they have only grown in popularity.

However, today’s fake images go beyond the work of skilled videographers or Photoshoppers. Deepfakes are a new breed of synthetic media: images, videos, and audio created through deep-learning algorithms.

This technology can create convincing footage of anyone doing anything, anywhere. Hence, understanding it and learning to safeguard yourself from it should be the top priority on your to-do list.

Here, we’ll go through what deepfakes are, their purpose, and what the solution might be.

Sections

- What is a deepfake?

- How are deepfakes made?

- How do you spot a deepfake?

- Can deepfakes impact your reputation?

- How to safeguard your reputation

- Is there a positive side to deepfakes?

What is a deepfake?

Deepfakes are usually videos where a person’s face is replaced by a computer-generated image resembling another individual. The ‘deep’ comes from deep learning, which uses artificial intelligence algorithms called neural networks to create incredibly convincing fakes.

The practice first came to prominence in 2017 on Reddit, when the online community r/deepfakes shared pornographic videos featuring deepfakes of various female celebrities. The celebrities had no part in creating these videos, but the techniques used meant they were more convincing than regular altered images, and the subreddit was eventually banned.

However, online recommendation algorithms helped this deepfake content go viral quickly. These algorithms work much like other personalized recommendations online, analyzing user profiles and interests to suggest popular content that aligns with each user’s interests.

Deepfakes may be used for any number of purposes, such as revenge porn, political attacks, spoofs, parodies, or satire. This type of negative content is often the subject of take-down requests and removal from search results.

More than videos

Deepfakes gained popularity as videos, but photos and audio can also be deepfaked. Deep-learning algorithms can create synthetic media to replicate voices and edit pictures to be impressively realistic.

This can have incredibly damaging consequences. For example, fraudsters have previously used AI to mimic a CEO’s voice. In one case, the head of a UK-based energy firm thought he was speaking over the phone to his boss, the chief executive of the German parent company. The voice was believable enough to make the CEO transfer $243,000.

The CEO only became suspicious when he received a call requesting a second payment. This is a worrying tale in a world filled with remote team communication, where voice calls for business transactions are commonplace, necessary, and not always synchronous.

How are deepfakes made?

Deepfake techniques have improved over the years. Researching deepfake tools is like researching ‘what is hosted PBX’: there are several solutions available. One such technique combines autoencoders with generative adversarial networks (GANs).

A GAN pits two AI algorithms against each other: a generator and a discriminator. This allows machines to learn rapidly and create more sophisticated synthetic media. They’re like an artist and a critic, constantly competing and pushing one another to improve.

The generator (artist) is fed random noise, which it turns into an image. The discriminator (critic) is fed reference images of the person being deepfaked. It then looks at the generated image and decides whether it’s a deepfake or not.

The generator attempts to create an image that fools the discriminator. Each time it succeeds, the discriminator uses the information it has gathered to improve its image-analyzing capabilities, the generator in turn learns from the critic’s verdicts, and the cycle starts all over again.

In this way, the image-creating abilities of GANs improve dramatically in a short period, leading to increasingly realistic synthetic media. Having extensive datasets improves this process.
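The artist-and-critic loop described above can be sketched in a few lines of Python. This is a toy illustration only, not how any real deepfake tool is built: the "image" here is a single number, the "reference images" are numbers drawn around an assumed value of 4.0, and both players are one-line formulas rather than deep neural networks. All names (`Generator`, `Discriminator`, `train`, and so on) are hypothetical and chosen for this example.

```python
import math
import random


def sigmoid(t):
    # Numerically stable logistic function, safe for large |t|.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


class Generator:
    """The artist: turns random noise z into a fake sample a*z + b."""

    def __init__(self):
        self.a, self.b = 1.0, 0.0

    def sample(self, z):
        return self.a * z + self.b


class Discriminator:
    """The critic: estimates the probability that a sample is real."""

    def __init__(self):
        self.w, self.c = 0.0, 0.0

    def prob_real(self, x):
        return sigmoid(self.w * x + self.c)


def discriminator_step(d, real, fake, lr):
    # One gradient-descent step on -log D(real) - log(1 - D(fake)):
    # the critic is rewarded for rating real high and fake low.
    p_real = d.prob_real(real)
    p_fake = d.prob_real(fake)
    grad_w = -(1.0 - p_real) * real + p_fake * fake
    grad_c = -(1.0 - p_real) + p_fake
    d.w -= lr * grad_w
    d.c -= lr * grad_c


def generator_step(g, d, z, lr):
    # One gradient-descent step on -log D(G(z)):
    # the artist is rewarded when the critic rates its fake as real.
    fake = g.sample(z)
    p = d.prob_real(fake)
    d_loss_d_fake = -(1.0 - p) * d.w
    g.a -= lr * d_loss_d_fake * z
    g.b -= lr * d_loss_d_fake


def train(steps=2000, lr=0.05, real_mean=4.0, seed=0):
    # real_mean stands in for "reference images of the person" (an assumption).
    rng = random.Random(seed)
    g, d = Generator(), Discriminator()
    for _ in range(steps):
        real = rng.gauss(real_mean, 0.5)
        fake = g.sample(rng.gauss(0.0, 1.0))
        discriminator_step(d, real, fake, lr)        # critic improves
        generator_step(g, d, rng.gauss(0.0, 1.0), lr)  # artist responds
    return g, d


if __name__ == "__main__":
    g, d = train()
    print("generator offset b:", g.b)
    print("critic's rating of a fresh fake:", d.prob_real(g.sample(0.0)))
```

Even in this tiny setting, the two players tend to chase each other rather than settle neatly, which hints at why real GAN training needs large datasets, careful tuning, and a lot of computing power.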

However, while AI has many uses, especially in the business world, where it can be used for email filters, process automation, or predictive analysis to boost conversion rates, it is limited by hardware.

Deepfake AIs can run for hours or days to create an accurate video, photo, or audio fake. Computing power is, therefore, an essential consideration when making them. High-powered desktops or cloud services are required to prevent performance bottlenecks, and if that hardware fails, so does the system generating the deepfake.

Who’s making deepfakes?

After hearing all the malicious ways deepfakes are used, you may be surprised to learn that they’re often created by academic and industrial researchers, visual effects studios, and amateur enthusiasts. As we’ll see later, there are positives to deepfakes.

Governments are also likely dabbling with deepfake technology. Because deepfakes are linked to misinformation, knowing how they work and how to identify them is essential to discrediting and disrupting any ill intent.

How do you spot a deepfake?

Poor-quality deepfakes are easy to identify. Bad lip-syncing, glitchy facial movements, and general inconsistencies are all signs of a deepfake-rendered video or image. For audio, irregular tone or rhythm can tell you it’s a fake.

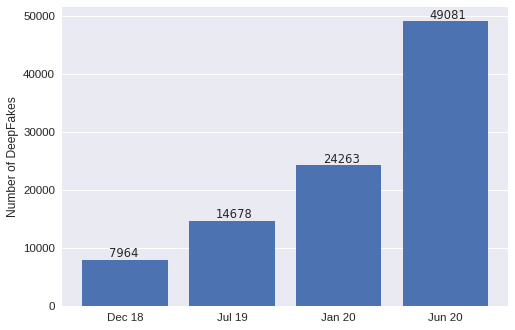

Identifying higher-quality deepfakes is more difficult. Advancements in technology coupled with freely available media mean more realistic versions are fast being developed.

Nevertheless, there are a few things deepfakes don’t always get right. AI struggles with lighting, skin tones, and fine details like hair. Inconsistencies in these areas can be telltale signs of deepfake rendering. Identifying audio deepfakes depends on the tone of voice. Everybody has a different rhythm and way of speaking, so irregularities stand out. For example:

- Jewelry might be inconsistently illuminated

- Skin tones may be patchy

- Irises may have odd reflections

- Audio may have abrupt sentence endings and beginnings or be overly monotonous or pitchy

When it comes to figuring out if a piece of media is a deepfake, look for the discrepancies: the things that should or shouldn’t be there. Deepfake media is created from multiple references and sources, so there’s often something odd.

What’s the solution?

While AI has supercharged malicious deepfakes, it’s also the answer to the problem. It can be used to identify deepfakes, but its current abilities are hampered.

Most deepfakes are based on celebrities, who have thousands of videos, audio clips, and images readily available as training data. This makes their deepfakes almost perfect.

There is, however, hope for the future. The Deepfake Detection Challenge is working with research teams worldwide to boost detection methods.

Although you can spot deepfakes by their inconsistencies, it could be some time before the highest-quality ones are eradicated.

Can deepfakes impact your reputation?

You may be reading this and thinking you’re safe because the only thing you use the internet for is finding out ‘what is an IP phone system’. After all, unless you’re running in a presidential election or famous beyond compare, nobody’s going to deepfake you, right?

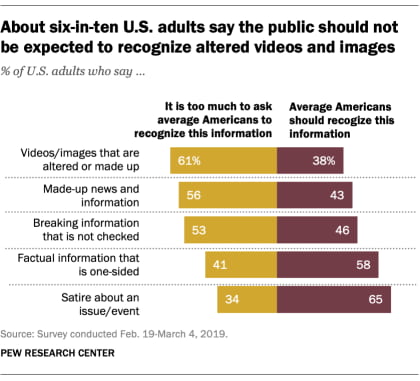

As technology advances, its impact is moving from those at the top to regular citizens. Many deepfakers are already targeting ordinary people. All they need is data in the form of images, audio, or video.

Today, sharing videos and photos is paramount to building a personal and professional brand. With multiple social channels all promoting ways to post visual content, it’s easier than ever to share a deepfake and for bad actors to obtain data ripe for faking.

How to safeguard your reputation

If someone wanted, they could show you doing and saying anything they liked. It could be a parody, an offensive gesture, or an endorsement of a competitor’s products. A viral video, soundbite, or image could negatively impact your reputation and that of your brand on a large scale.

Sharing deepfakes can additionally damage your credibility, trust, and reputation. This makes understanding how to spot harmful synthetic media imperative.

Always consider the authenticity of the images you post and share. If you’re not sure, check. Otherwise, you could cause irreparable damage to your brand or someone else’s.

Think about the apps and services you use. Are they trustworthy? Are they storing facial data securely?

Be upfront and transparent about your brand so deepfakes are easily distinguished. Social media sites will take down reported deepfake posts and, if necessary, you can use a personal reputation management company to help protect yourself.

Is there a positive side to deepfakes?

While deepfakes can be used to harass, demean, and undermine individuals, not all deepfakes are malicious. Creators can also use them for entertainment, e.g. to appear in their favorite films or create comedic impersonations.

In visual effects studios and movie franchises such as Star Wars and Marvel, deepfakes have de-aged actors to look like their younger selves, allowing for enhanced storytelling. They can also improve dubbing in foreign films or recondition old, deteriorated footage.

Another use is improving the user experience. For example, the Salvador Dalí Museum in Florida has a deepfake of the surrealist painter in which he introduces his art and takes selfies with visitors.

Entertainment isn’t the only beneficial application for deepfakes. Diseases such as ALS can take away a person’s ability to speak. Using this technology, voices can be cloned for everyday use via augmentative communication devices.

Audio reproduction solutions like voice cloning often use the cloud to handle and scale intensive AI tasks. You can even look for cloud solutions to reproduce your voice.

Deepfakes are here to stay

It’s not unusual to read an article about viral deepfakes or log onto social media and see a funny gif made using the algorithms. As technology and deepfake AI improve, the resulting images, videos, and audio will become increasingly indistinguishable from reality. With ever-growing datasets also freely available online, anyone could become a target of malicious intent.

Deepfake media is identified by its inconsistencies. When it comes to safeguarding your reputation, consider what you post online and be upfront and transparent about your brand. You can take legal action against deepfakes, and social media sites will take down reported deepfake posts. Reputation management companies can help make the process smoother.

However, not all deepfakes are harmful. There are growing, legitimate uses in visual effects studios, museums, and medicine. As laws catch up and social media companies take action to eliminate deepfakes made with ill intent, these uses will flourish.

While you can resolve many other issues negatively impacting your business, for now, deepfakes and the community making them are here to stay. Educating yourself on how to combat them is your most effective defense against becoming the next influential figure to fall victim.

Richard Conn is the Senior Director for Demand Generation at 8×8, a leading communication platform with an integrated contact center, predictive dialer, and IP phone system. Richard is an analytical & results-driven digital marketing leader with a track record of achieving major ROI improvements in fast-paced, competitive B2B environments.
